Autoencoder Feature Extraction for Classification

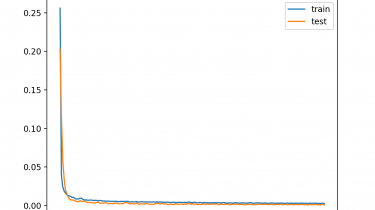

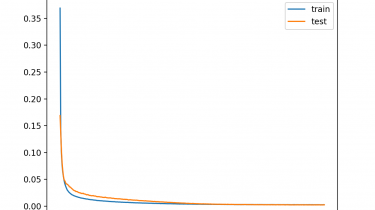

An autoencoder is a type of neural network that can be used to learn a compressed representation of raw data. It is composed of two sub-models: an encoder and a decoder. The encoder compresses the input, and the decoder attempts to recreate the input from the compressed version provided by the encoder. After training, the encoder model is saved and the decoder is discarded. The encoder can then be used as a data preparation technique to perform feature extraction on raw […]
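To make the encoder/decoder split concrete, here is a minimal NumPy-only sketch of the idea. It is not tied to any particular deep learning library, and everything in it is an assumption made for illustration: the synthetic dataset, the bottleneck size of 3, the `tanh` activation, the learning rate, and the helper names `encode`, `decode`, and `reconstruction_mse`.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data (assumed for illustration): 200 rows, 8 features that
# lie close to a 3-dimensional subspace, then standardized per column.
latent = rng.normal(size=(200, 3))
X = latent @ rng.normal(size=(3, 8)) + 0.01 * rng.normal(size=(200, 8))
X = (X - X.mean(axis=0)) / X.std(axis=0)

n_features, n_bottleneck = X.shape[1], 3  # bottleneck size is a free choice

# Encoder and decoder parameters, initialized with small random weights.
W_enc = rng.normal(scale=0.1, size=(n_features, n_bottleneck))
b_enc = np.zeros(n_bottleneck)
W_dec = rng.normal(scale=0.1, size=(n_bottleneck, n_features))
b_dec = np.zeros(n_features)

def encode(data):
    """Encoder: compress the input to the bottleneck representation."""
    return np.tanh(data @ W_enc + b_enc)

def decode(codes):
    """Decoder: try to recreate the input from the compressed codes."""
    return codes @ W_dec + b_dec

def reconstruction_mse(data):
    return float(np.mean((decode(encode(data)) - data) ** 2))

initial_mse = reconstruction_mse(X)

# Train both halves together with plain gradient descent on squared error.
learning_rate = 0.02
for _ in range(2000):
    Z = encode(X)
    err = (decode(Z) - X) / len(X)      # gradient w.r.t. the reconstruction
    d_pre = (err @ W_dec.T) * (1 - Z ** 2)  # backprop through tanh
    W_dec -= learning_rate * (Z.T @ err)
    b_dec -= learning_rate * err.sum(axis=0)
    W_enc -= learning_rate * (X.T @ d_pre)
    b_enc -= learning_rate * d_pre.sum(axis=0)

final_mse = reconstruction_mse(X)

# After training, only the encoder is kept; its output is the feature set
# that would be passed on to a downstream classifier.
features = encode(X)
```

Here `features` has one row per input sample and one column per bottleneck unit. In practice the encoder would be fit on training data only and then applied unchanged to held-out data before training a classifier on the extracted features.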
