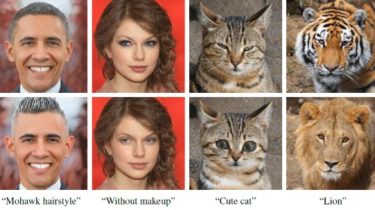

StyleCLIP: Text-Driven Manipulation of StyleGAN Imagery

StyleCLIP: Text-Driven Manipulation of StyleGAN Imagery (ICCV 2021 Oral) StyleCLIP: Text-Driven Manipulation of StyleGAN ImageryOr Patashnik*, Zongze Wu*, Eli Shechtman, Daniel Cohen-Or, Dani Lischinski*Equal contribution, ordered alphabeticallyhttps://arxiv.org/abs/2103.17249 Abstract: Inspired by the ability of StyleGAN to generate highly realistic images in a variety of domains, much recent work has focused on understanding how to use the latent spaces of StyleGAN to manipulate generated and real images. However, discovering semantically meaningful latent manipulations typically involves painstaking human examination of the many degrees […]

Read more