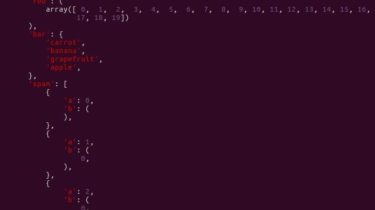

A Python framework for interacting with time series data

Machine Learning and Data Analytics Graphical User Interface

The current version is not stable and might crash unexpectedly!

What is it?

The MaD GUI is a framework for processing time series data. Its use-cases include visualization, annotation (manual or automated), and algorithmic processing of visualized data and annotations.

How do I use it?

By clicking on the images below, you will be redirected to YouTube. In case you want to follow along on your own machine, check out the section […]
