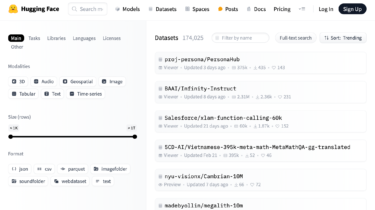

Data Is Better Together: A Look Back and Forward

For the past few months, we have been working on the Data Is Better Together initiative. Through this collaboration between Hugging Face and Argilla, supported by the open-source ML community, our goal has been to empower the open-source community to create impactful datasets collectively. Now, we have decided to keep moving forward with the same goal. To give an overview of our achievements and of the tasks where everyone can contribute, we have organized this post into two sections: community efforts and cookbook […]