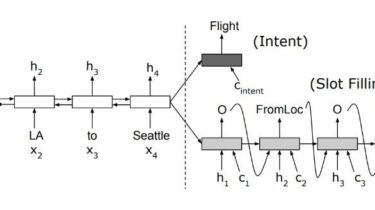

Attention-Based Recurrent Neural Network Models for Joint Intent Detection and Slot Filling

PyTorch implementation of "Attention-Based Recurrent Neural Network Models for Joint Intent Detection and Slot Filling" (https://arxiv.org/pdf/1609.01454.pdf). Intent detection and slot filling are performed in two branches on top of a shared encoder-decoder model.

Dataset (ATIS)

You can get data from here

Requirements

Train

`python3 train.py --data_path 'your data path'` (e.g. `./data/atis-2.train.w-intent.iob`)

Result

GitHub

https://github.com/DSKSD/RNN-for-Joint-NLU
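To make the input to the training command more concrete, here is a minimal sketch of how one line of an ATIS `*.w-intent.iob` file could be split into tokens, slot tags, and an intent label. The line layout is an assumption and is not stated in this README: the words are assumed to be wrapped in `BOS`/`EOS`, followed by a tab, then one IOB tag per word, with the final position holding the intent. The `Example` type, the `parse_line` helper, and the sample sentence are hypothetical and only illustrative; they are not taken from the repository.

```python
from typing import List, NamedTuple


class Example(NamedTuple):
    tokens: List[str]  # input words, without the BOS/EOS markers
    slots: List[str]   # one IOB slot tag per token
    intent: str        # utterance-level intent label


def parse_line(line: str) -> Example:
    """Parse one line of the ASSUMED layout:
    'BOS w1 ... wn EOS<TAB>O t1 ... tn intent'.
    """
    words_part, tags_part = line.rstrip("\n").split("\t")
    words = words_part.split()
    tags = tags_part.split()

    tokens = words[1:-1]  # drop BOS and EOS
    slots = tags[1:-1]    # drop the tag aligned with BOS and the trailing intent
    intent = tags[-1]     # the last position carries the intent label

    if len(tokens) != len(slots):
        raise ValueError("token/slot length mismatch: %d vs %d" % (len(tokens), len(slots)))
    return Example(tokens, slots, intent)


# Made-up sample line for illustration (not copied from the dataset).
sample = (
    "BOS flights from boston to denver EOS"
    "\tO O O B-fromloc.city_name O B-toloc.city_name atis_flight"
)
example = parse_line(sample)
print(example.tokens)
print(example.slots)
print(example.intent)
```

In a joint model of this kind, the per-token `slots` would serve as the targets for the slot-filling branch and the single `intent` as the target for the intent branch, which is why the two are separated at load time.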
