Opinion Classification with Kili and HuggingFace AutoTrain

Understanding your users’ needs is crucial in any user-related business. But it also requires a lot of hard work and analysis, which is quite expensive. Why not leverage Machine Learning then, with much less coding thanks to AutoML? In this article, we will leverage HuggingFace AutoTrain and Kili to build an active learning pipeline for text classification. Kili

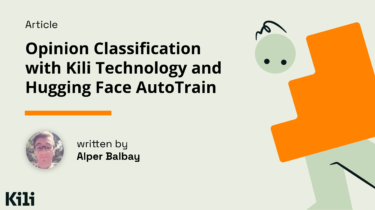

Accelerate Large Model Training using PyTorch Fully Sharded Data Parallel

In this post we will look at how to use the Accelerate library to train large models, which lets users take advantage of the latest features of PyTorch FullyShardedDataParallel (FSDP).

An Introduction to Deep Reinforcement Learning

⚠️ A new, updated version of this article is available here 👉 https://huggingface.co/deep-rl-course/unit1/introduction ⚠️ This article is part of the Deep Reinforcement Learning Class, a free course from beginner to expert.

Welcome fastai to the Hugging Face Hub

Few have done as much as the fast.ai ecosystem to make Deep Learning accessible. Our mission at Hugging Face is to democratize good Machine Learning. Let’s make exclusivity in access to Machine Learning, including pre-trained models, a thing of the past and let’s push this amazing field even further. fastai is

We Raised $100 Million for Open & Collaborative Machine Learning 🚀

Today we have some exciting news to share! Hugging Face has raised $100 Million in Series C funding 🔥🔥🔥 led by Lux Capital, with major participation from Sequoia and Coatue and the support of existing investors Addition, a_capital, SV Angel, Betaworks, AIX Ventures, Kevin Durant, Rich Kleiman from Thirty Five Ventures, Olivier Pomel (co-founder & CEO at Datadog) and more.

Accelerated Inference with Optimum and Transformers Pipelines

Inference has landed in Optimum with support for Hugging Face Transformers pipelines, including text-generation using ONNX Runtime. The adoption of BERT and Transformers continues to grow. Transformer-based models are now achieving state-of-the-art performance not only in Natural Language Processing but also in Computer Vision, Speech, and Time-Series. 💬 🖼 🎤 ⏳ Companies are now moving from the experimentation and research

Student Ambassador Program’s call for applications is open!

As an open-source company democratizing machine learning, Hugging Face believes it is essential to teach open-source ML to people from all backgrounds worldwide. We aim to teach machine learning to 5 million people by 2023. Are you studying machine learning and/or already evangelizing your community with ML? Do you want to be a part of our ML democratization efforts and show your
