Leveraging Pre-trained Language Model Checkpoints for Encoder-Decoder Models

Transformer-based encoder-decoder models were proposed in Vaswani et al. (2017) and have recently experienced a surge of interest, e.g. Lewis et al. (2019), Raffel et al. (2019), Zhang et al. (2020), Zaheer et al. (2020), Yan et al. (2020). Similar

How we sped up transformer inference 100x for 🤗 API customers

🤗 Transformers has become the default library for data scientists all around the world to explore state-of-the-art NLP models and build new NLP features. With over 5,000 pre-trained and fine-tuned models available, in over 250 languages, it is a rich playground, easily accessible whichever framework you are working in. While experimenting with models in 🤗 Transformers is easy, deploying

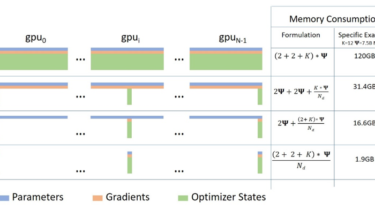

Fit More and Train Faster With ZeRO via DeepSpeed and FairScale

A guest blog post by Hugging Face fellow Stas Bekman.

As recent Machine Learning models have been growing much faster than the amount of GPU memory added to newly released cards, many users are unable to train or even just load some of those huge models onto their hardware. While there is an ongoing effort to distill some of those huge models

Faster TensorFlow models in Hugging Face Transformers

In the last few months, the Hugging Face team has been working hard on improving Transformers’ TensorFlow models to make them more robust and faster. The recent improvements are mainly focused on two aspects: Computational performance: BERT, RoBERTa, ELECTRA and MPNet have been improved in order to have a much faster computation time. This

Hugging Face on PyTorch / XLA TPUs: Faster and cheaper training

Training Your Favorite Transformers on Cloud TPUs using PyTorch / XLA

The PyTorch-TPU project originated

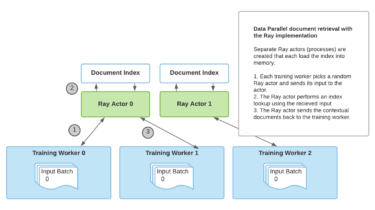

Retrieval Augmented Generation with Huggingface Transformers and Ray

A guest blog post by Amog Kamsetty from the Anyscale team.

Huggingface Transformers recently added the Retrieval Augmented Generation (RAG) model, a new NLP architecture that leverages external documents (like Wikipedia) to augment its knowledge and achieve state of

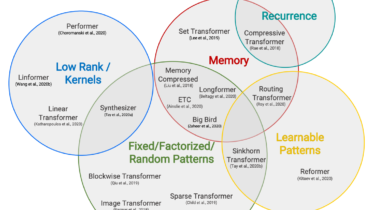

Hugging Face Reads, Feb. 2021 – Long-range Transformers

Efficient Transformers taxonomy from "Efficient Transformers: a Survey" by Tay et al.

Co-written by Teven Le Scao, Patrick Von Platen, Suraj Patil, Yacine Jernite and Victor Sanh.

Each month, we will choose a topic to focus on, reading a set of four papers recently published on the subject. We will then write a short blog post summarizing

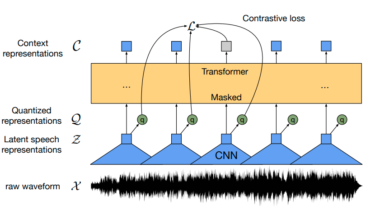

Fine-Tune Wav2Vec2 for English ASR with 🤗 Transformers

Wav2Vec2 is a pretrained model for Automatic Speech Recognition (ASR) and was released in September 2020 by Alexei Baevski, Michael Auli, and Alex Conneau. Using a novel contrastive pretraining objective, Wav2Vec2 learns powerful speech representations from more than 50,000 hours of unlabeled speech. Similar to BERT’s masked language
