Dynamic Ensemble Selection (DES) for Classification in Python

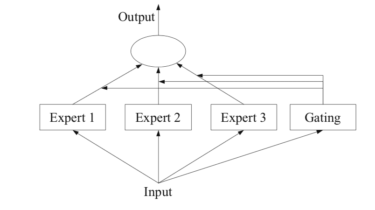

Dynamic ensemble selection is an ensemble learning technique that automatically selects a subset of ensemble members just-in-time, at the moment a prediction is made. The technique involves fitting multiple machine learning models on the training dataset and then, for each new example, selecting the models that are expected to perform best on that specific example, based on the example's own details. This can be achieved using a k-nearest neighbor model to locate examples in the training dataset that are […]
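The excerpt stops before naming a specific selection rule. The sketch below therefore uses one well-known DES rule, KNORA-Eliminate (k-nearest oracles), written in plain Python with no libraries. It is a minimal illustration under stated assumptions, not the article's own implementation. The base models are hypothetical hand-built decision stumps (`make_stump`) standing in for fitted classifiers. `X_sel`/`y_sel` is a tiny made-up labelled set used to judge local competence. Falling back to the full ensemble when no model is competent is also an assumption.

```python
import math
from collections import Counter
from typing import Callable, List, Sequence, Tuple

Point = Tuple[float, ...]
Model = Callable[[Point], int]


def make_stump(feature: int, threshold: float) -> Model:
    """Hypothetical base model: predicts 1 if x[feature] > threshold, else 0."""
    return lambda x: 1 if x[feature] > threshold else 0


def majority_vote(models: Sequence[Model], x: Point) -> int:
    """Static ensemble baseline: every given model votes on x."""
    return Counter(m(x) for m in models).most_common(1)[0][0]


def knora_e_predict(models: Sequence[Model], X_sel: Sequence[Point],
                    y_sel: Sequence[int], x: Point, k: int = 3) -> int:
    """KNORA-Eliminate style dynamic ensemble selection for one query x."""
    # Rank the labelled selection examples by Euclidean distance to the query.
    order = sorted(range(len(X_sel)), key=lambda i: math.dist(X_sel[i], x))
    competent: List[Model] = []
    # Keep models that classify all k nearest neighbours correctly;
    # if no model qualifies, shrink the neighbourhood and try again.
    for kk in range(k, 0, -1):
        neighbours = order[:kk]
        competent = [m for m in models
                     if all(m(X_sel[i]) == y_sel[i] for i in neighbours)]
        if competent:
            break
    if not competent:
        # Assumed fallback: use the whole ensemble when no model is locally competent.
        competent = list(models)
    # Combine only the selected models by majority vote.
    return majority_vote(competent, x)


# Made-up XOR-like toy data: label is 1 when exactly one coordinate exceeds 0.5.
X_sel = [(0.1, 0.1), (0.2, 0.2), (0.9, 0.1), (0.8, 0.2),
         (0.1, 0.9), (0.2, 0.8), (0.9, 0.9)]
y_sel = [0, 0, 1, 1, 1, 1, 0]
models = [make_stump(0, 0.5), make_stump(1, 0.5), make_stump(1, 0.15)]

query = (0.85, 0.15)
print(majority_vote(models, query))                  # 0
print(knora_e_predict(models, X_sel, y_sel, query))  # 1
```

Because the toy labels follow an XOR-like pattern, no single stump is right everywhere. Near (0.85, 0.15), however, only the first stump classifies all three nearest neighbours correctly, so the dynamic selection predicts 1 (the label the pattern implies), while a plain vote over all three models predicts 0.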
