Iterated Local Search From Scratch in Python

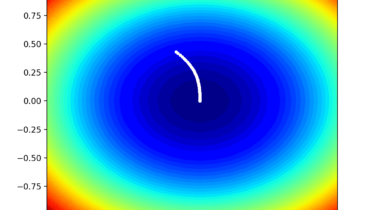

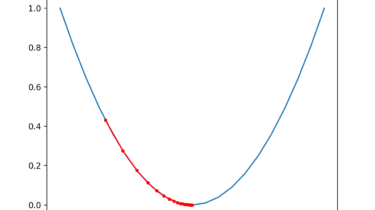

Iterated Local Search is a stochastic global optimization algorithm. It involves the repeated application of a local search algorithm to modified versions of a good solution found previously. In this way, it is like a clever version of the stochastic hill climbing with random restarts algorithm. The intuition behind the algorithm is that random restarts can help to locate many local optima in a problem and that better local optima are often close to other local optima. Therefore modest perturbations […]

Read more