A Generalization of Transformer Networks to Graphs

Source code for the paper “A Generalization of Transformer Networks to Graphs” by Vijay Prakash Dwivedi and Xavier Bresson, presented at the AAAI’21 Workshop on Deep Learning on Graphs: Methods and Applications (DLG-AAAI’21).

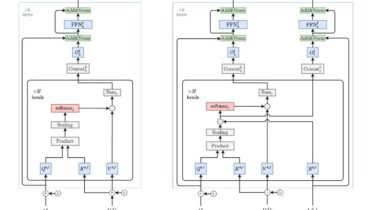

We propose Graph Transformer, a generalization of the transformer neural network architecture to arbitrary graphs.
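As a rough illustration of how a single layer of this kind might operate, here is a minimal NumPy sketch. It is not the authors' implementation, and it makes several assumptions: a dense, symmetric 0/1 adjacency matrix; a single attention head; each node attends to its neighbors *and itself*; the symmetric normalized Laplacian for the position encodings; batch normalization without learned scale/shift; and no edge features. All function and parameter names (`laplacian_pe`, `neighborhood_attention`, `graph_transformer_layer`, `w_q`, ...) are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)


def laplacian_pe(adj: np.ndarray, k: int) -> np.ndarray:
    """k non-trivial eigenvectors of the symmetric normalized Laplacian, one row per node."""
    n = adj.shape[0]
    # Clamp degrees to >= 1 so isolated nodes do not divide by zero (their adjacency rows are 0 anyway).
    d_inv_sqrt = 1.0 / np.sqrt(np.maximum(adj.sum(axis=1), 1.0))
    lap = np.eye(n) - d_inv_sqrt[:, None] * adj * d_inv_sqrt[None, :]
    _, eigvecs = np.linalg.eigh(lap)  # eigenvalues come back in ascending order
    return eigvecs[:, 1:k + 1]  # skip the first (trivial, eigenvalue 0) eigenvector


def neighborhood_attention(h, adj, w_q, w_k, w_v):
    """Single-head scaled dot-product attention restricted to each node's neighborhood."""
    q, k, v = h @ w_q, h @ w_k, h @ w_v
    scores = q @ k.T / np.sqrt(k.shape[1])
    # Assumption: self-loops are added, which also keeps every row of the mask non-empty.
    mask = (adj > 0) | np.eye(adj.shape[0], dtype=bool)
    scores = np.where(mask, scores, -np.inf)
    scores -= scores.max(axis=1, keepdims=True)  # numerical stability
    weights = np.exp(scores)  # exp(-inf) = 0, so non-neighbors get zero weight
    weights /= weights.sum(axis=1, keepdims=True)
    return weights @ v, weights


def batch_norm(x, eps=1e-5):
    """Normalize each feature across the nodes in the batch (learned scale/shift omitted)."""
    return (x - x.mean(axis=0)) / np.sqrt(x.var(axis=0) + eps)


def graph_transformer_layer(h, adj, p):
    """Attention -> residual -> BatchNorm, then FFN -> residual -> BatchNorm."""
    attn, _ = neighborhood_attention(h, adj, p["w_q"], p["w_k"], p["w_v"])
    h = batch_norm(h + attn @ p["w_o"])
    ffn = np.maximum(h @ p["w_1"], 0.0) @ p["w_2"]  # two-layer ReLU MLP (hidden width d for brevity)
    return batch_norm(h + ffn)


# Toy example: a 5-node path graph 0-1-2-3-4 with 3 input features per node.
n, d_in, d, k = 5, 3, 8, 2
adj = np.zeros((n, n))
for i in range(n - 1):
    adj[i, i + 1] = adj[i + 1, i] = 1.0
x = rng.normal(size=(n, d_in))
pe = laplacian_pe(adj, k)
# Project node features and position encodings to d dims and add them (random, hypothetical weights).
h0 = x @ rng.normal(size=(d_in, d)) + pe @ rng.normal(size=(k, d))
params = {name: rng.normal(size=(d, d)) / np.sqrt(d)
          for name in ["w_q", "w_k", "w_v", "w_o", "w_1", "w_2"]}
out = graph_transformer_layer(h0, adj, params)
print(out.shape)  # (5, 8)
```

Restricting the softmax with a mask keeps the sketch short. A sparse, edge-list formulation would avoid the O(n²) score matrix on large graphs. Note that Laplacian eigenvectors are only defined up to sign, so a training pipeline would typically need to account for that ambiguity.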

Compared to the standard Transformer, the highlights of the proposed architecture are:

- The attention mechanism is a function of the neighborhood connectivity of each node: a node attends only to its neighbors in the graph.

- The position encoding is represented by Laplacian eigenvectors, which naturally generalize the sinusoidal positional encodings often used in NLP.

- Layer normalization is replaced by batch normalization.

- The architecture is extended to have edge representation, which can be critical to tasks with rich information on the edges, or pairwise