VidTok introduces compact, efficient tokenization to enhance AI video processing

Every day, countless videos are uploaded and processed online, putting enormous strain on computational resources. The problem isn’t just the sheer volume of data; it’s also how that data is structured. Videos consist of raw pixel data, where neighboring pixels often store nearly identical information. This redundancy wastes resources and makes it harder for systems to process visual content efficiently.

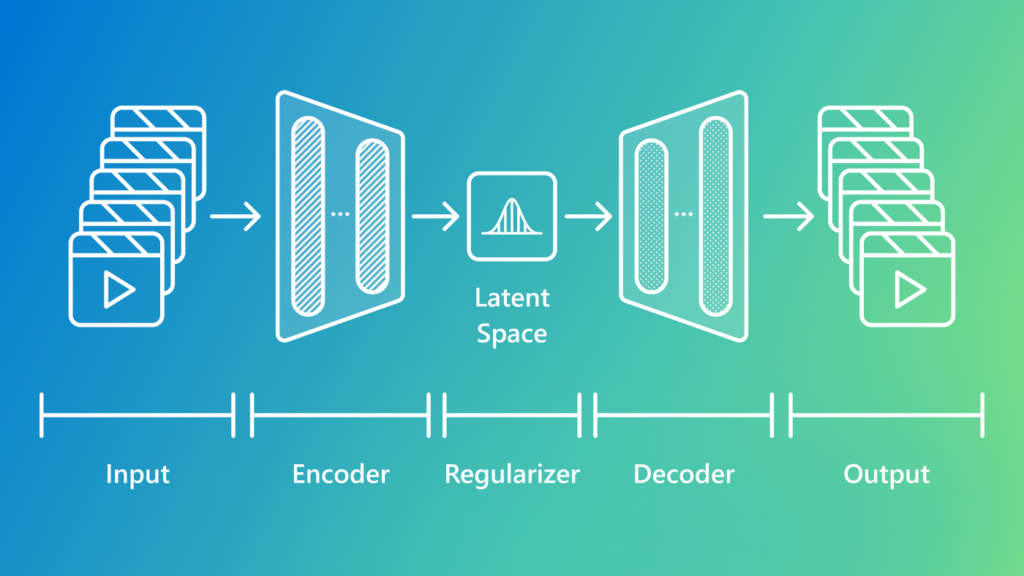

To tackle this, we’ve developed a new approach to compress visual data into a