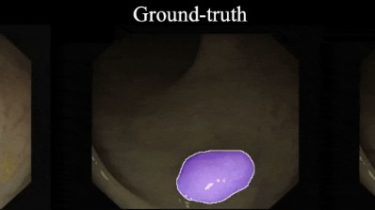

Progressively Normalized Self-Attention Network for Video Polyp Segmentation

PNS-Net

This repository provides code for paper”Progressively Normalized Self-Attention Network for Video Polyp Segmentation” published at the MICCAI-2021 conference (arXiv Version | 中文版). If you have any questions about our paper, feel free to contact me. And if you like our PNS-Net or evaluation toolbox for your personal research, please cite this paper (BibTeX).

Features

- Hyper Real-time Speed: Our method, named Progressively Normalized Self-Attention Network (PNS-Net), can efficiently learn representations from polyp videos with real-time speed (~140fps) on a single NVIDIA RTX 2080 GPU without any post-processing techniques (e.g., Dense-CRF).

- Plug-and-Play Module: The proposed core module, termed Normalized Self-attention (NS), utilizes channel split,query-dependent, and normalization rules to reduce the computational cost and improve the accuracy, respectively. Note that this module can be