A Differentiable Loss Function for Time-Series in CUDA

Soft DTW Loss Function for PyTorch in CUDA

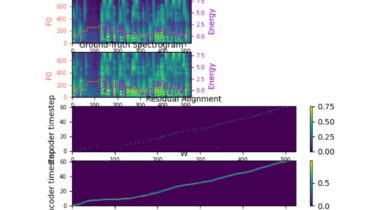

This is a PyTorch implementation of Soft-DTW: a Differentiable Loss Function for Time-Series. It supports batched computation, is CUDA-friendly, and can be used as a final loss. I can confirm that you can train a (sequential) model with it as the final loss! The image above shows training logs of a TTS model trained with the Soft-DTW loss function.
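For reference, the quantity being computed can be sketched in a few lines of pure Python. This is a minimal sketch, not this repository's CUDA code: it assumes squared Euclidean frame distances and a smoothing parameter `gamma > 0`, and the names `soft_min`, `pairwise_sq_dist`, `soft_dtw`, and `soft_dtw_batch` are hypothetical. Each cell of the accumulated cost matrix `R` adds the local distance to a smoothed minimum over its three predecessors, and that smoothing is what makes the loss differentiable.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def soft_min(values: Sequence[float], gamma: float) -> float:
    """Smoothed minimum: -gamma * log(sum(exp(-v / gamma))), computed stably."""
    m = min(values)
    if m == math.inf:  # every candidate is unreachable
        return m
    # Shift by the true minimum so exp() never overflows; +inf entries contribute 0.
    total = sum(math.exp(-(v - m) / gamma) for v in values)
    return m - gamma * math.log(total)


def pairwise_sq_dist(x: Sequence[Vector], y: Sequence[Vector]) -> List[List[float]]:
    """Assumption: squared Euclidean distance between frames, D[i][j] = ||x_i - y_j||^2."""
    return [[sum((a - b) ** 2 for a, b in zip(xi, yj)) for yj in y] for xi in x]


def soft_dtw(x: Sequence[Vector], y: Sequence[Vector], gamma: float = 0.1) -> float:
    """Soft-DTW value for one pair of sequences; lengths may differ."""
    d = pairwise_sq_dist(x, y)
    n, m = len(x), len(y)
    # R gets an extra leading row/column of +inf so the recursion needs no special cases.
    r = [[math.inf] * (m + 1) for _ in range(n + 1)]
    r[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            best = soft_min((r[i - 1][j - 1], r[i - 1][j], r[i][j - 1]), gamma)
            r[i][j] = d[i - 1][j - 1] + best
    return r[n][m]


def soft_dtw_batch(xs, ys, gamma: float = 0.1) -> List[float]:
    """Hypothetical batch wrapper: one loss per (x, y) pair, computed sequentially here."""
    return [soft_dtw(x, y, gamma) for x, y in zip(xs, ys)]
```

Calling `soft_dtw_batch([x1, x2], [y1, y2], gamma=0.1)` returns one loss per pair. Because the smoothed minimum is never larger than the true minimum, the value can be negative, and it approaches the classic DTW cost as `gamma` shrinks. Cells on the same anti-diagonal of `R` do not depend on each other, which is the property a CUDA kernel can exploit, in addition to processing the batch in parallel.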

There are some previous implementations:

However, they either do not support CUDA-friendly batched computation or do not account for the Jacobian with respect to the input matrix, which is required to use Soft-DTW as the final loss in recent deep learning frameworks. The current implementation satisfies all of these conditions.
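To illustrate what that Jacobian involves, the following pure-Python sketch (an illustration under stated assumptions, not this repository's kernel) runs the forward recursion, then a backward recursion that yields `E[i][j]`, the derivative of the loss with respect to each entry of the distance matrix, and finally applies the chain rule to obtain the gradient with respect to the input sequence `x`. It assumes squared Euclidean distances, `D[i][j] = ||x_i - y_j||^2`; the function names are hypothetical.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def _soft_min(values, gamma: float) -> float:
    """Stable smoothed minimum: -gamma * log(sum(exp(-v / gamma)))."""
    m = min(values)
    if m == math.inf:
        return m
    return m - gamma * math.log(sum(math.exp(-(v - m) / gamma) for v in values))


def soft_dtw_with_grad(x: Matrix, y: Matrix, gamma: float = 0.1) -> Tuple[float, Matrix]:
    """Hypothetical helper: (soft-DTW value, dLoss/dx) for squared Euclidean distances."""
    n, m = len(x), len(y)
    inf = math.inf
    # 1-indexed distance matrix D[1..n][1..m], zero-padded on all sides.
    d = [[0.0] * (m + 2) for _ in range(n + 2)]
    for i in range(n):
        for j in range(m):
            d[i + 1][j + 1] = sum((a - b) ** 2 for a, b in zip(x[i], y[j]))
    # Forward: R[i][j] = D[i][j] + softmin(R[i-1][j-1], R[i-1][j], R[i][j-1]).
    r = [[inf] * (m + 2) for _ in range(n + 2)]
    r[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            r[i][j] = d[i][j] + _soft_min((r[i - 1][j - 1], r[i - 1][j], r[i][j - 1]), gamma)
    value = r[n][m]
    # Backward: E[i][j] = dR[n][m] / dD[i][j]. Border cells get -inf so they contribute 0.
    for i in range(1, n + 2):
        r[i][m + 1] = -inf
    for j in range(1, m + 2):
        r[n + 1][j] = -inf
    r[n + 1][m + 1] = value
    e = [[0.0] * (m + 2) for _ in range(n + 2)]
    e[n + 1][m + 1] = 1.0
    for j in range(m, 0, -1):
        for i in range(n, 0, -1):
            # Each factor is the soft-min weight that successor cell gives to R[i][j].
            a = math.exp((r[i + 1][j] - r[i][j] - d[i + 1][j]) / gamma)
            b = math.exp((r[i][j + 1] - r[i][j] - d[i][j + 1]) / gamma)
            c = math.exp((r[i + 1][j + 1] - r[i][j] - d[i + 1][j + 1]) / gamma)
            e[i][j] = e[i + 1][j] * a + e[i][j + 1] * b + e[i + 1][j + 1] * c
    # Chain rule through D[i][j] = ||x_i - y_j||^2, whose gradient in x_i is 2 (x_i - y_j).
    grad = [[0.0] * len(x[0]) for _ in range(n)]
    for i in range(n):
        for j in range(m):
            w = 2.0 * e[i + 1][j + 1]
            for k in range(len(x[i])):
                grad[i][k] += w * (x[i][k] - y[j][k])
    return value, grad
```

A finite-difference check against `soft_dtw_with_grad(x, y)[0]` is a simple way to validate a backward pass like this one. In PyTorch, one common way to plug such a hand-written gradient into training is a custom `torch.autograd.Function` whose `backward` returns it.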

Usage

Same as Maghoumi’s pytorch-softdtw-cuda:

from