Official implementation for TransDA

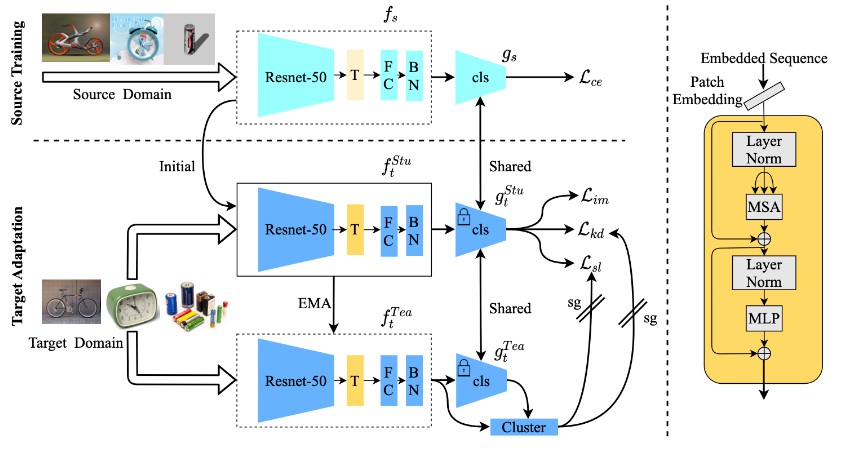

Official PyTorch implementation of “Transformer-Based Source-Free Domain Adaptation”.

Overview:

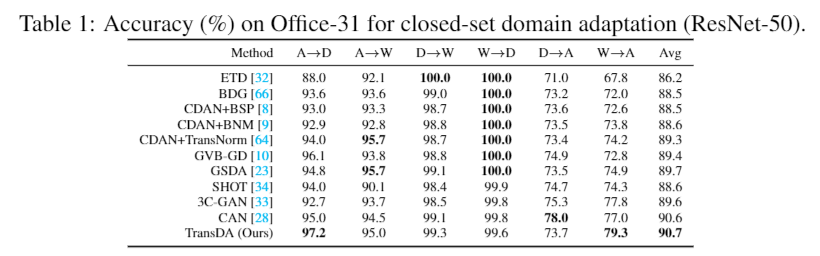

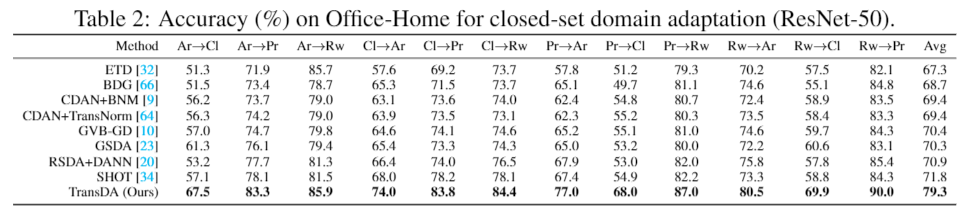

Result:

Prerequisites:

- python == 3.6.8

- pytorch == 1.1.0

- torchvision == 0.3.0

- numpy, scipy, sklearn, PIL, argparse, tqdm
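The pinned versions above can be checked with a short script before training. This is a minimal sketch, not part of the repository: the helper names `check_versions` and `installed_versions` are hypothetical, and it only covers the three packages that are pinned to exact versions.

```python
import sys

# Pinned versions taken from the prerequisites list above.
EXPECTED = {"python": "3.6.8", "torch": "1.1.0", "torchvision": "0.3.0"}


def check_versions(installed, expected=EXPECTED):
    """Return (name, installed, expected) tuples for every mismatch."""
    mismatches = []
    for name, want in expected.items():
        have = installed.get(name)
        # Compare only the part before "+" so local build tags
        # such as "1.1.0+cu100" still count as a match.
        if have is None or have.split("+")[0] != want:
            mismatches.append((name, have, want))
    return mismatches


def installed_versions():
    """Collect the interpreter version and, if importable, torch/torchvision."""
    found = {"python": "%d.%d.%d" % sys.version_info[:3]}
    for module in ("torch", "torchvision"):
        try:
            found[module] = __import__(module).__version__
        except ImportError:
            found[module] = None  # reported as a mismatch by check_versions
    return found


if __name__ == "__main__":
    for name, have, want in check_versions(installed_versions()):
        print("%s: found %s, expected %s" % (name, have, want))
```

The script prints nothing when all three versions match; newer versions may still work, but they are untested against the list above.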

Prepare the pretrained model

We choose R50-ViT-B_16 as our encoder.

wget https://storage.googleapis.com/vit_models/imagenet21k/R50+ViT-B_16.npz

mkdir -p ./model/vit_checkpoint/imagenet21k

mv R50+ViT-B_16.npz ./model/vit_checkpoint/imagenet21k/R50+ViT-B_16.npz
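After running the commands above, it can be worth confirming that the download completed and the file sits where the code expects it. The sketch below is an assumption-laden sanity check rather than part of the repository: the helper `list_npz_arrays` is hypothetical, and it relies only on the fact that an `.npz` file is a zip archive with one `.npy` member per array, so nothing is loaded into memory.

```python
import zipfile
from pathlib import Path

# Location used by the commands above.
CHECKPOINT = Path("./model/vit_checkpoint/imagenet21k/R50+ViT-B_16.npz")


def list_npz_arrays(path):
    """Return the array names stored in an .npz file without loading them."""
    path = Path(path)
    if not path.is_file():
        raise FileNotFoundError("checkpoint not found: %s" % path)
    with zipfile.ZipFile(str(path)) as archive:
        # testzip() checks every member's CRC and returns the first
        # corrupt member name, or None if the archive is intact.
        bad = archive.testzip()
        if bad is not None:
            raise ValueError("corrupt member in archive: %s" % bad)
        # Strip the ".npy" suffix to recover the array names.
        return [n[:-4] for n in archive.namelist() if n.endswith(".npy")]


if __name__ == "__main__":
    if CHECKPOINT.is_file():
        names = list_npz_arrays(CHECKPOINT)
        print("%d arrays found in %s" % (len(names), CHECKPOINT))
    else:
        print("checkpoint not found at %s" % CHECKPOINT)
```

A truncated download typically fails here with a `zipfile.BadZipFile` or the `ValueError` above, which is easier to diagnose than a failure deep inside model loading.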

Our checkpoints can be found on Dropbox.

Dataset:

- Please manually download the Office, Office-Home, VisDA, and Office-Caltech datasets from their official websites, then update the image paths in each ‘.txt’ file under the folder ‘./data/’.

- The script “download_visda2017.sh” in the data folder can also be used to download VisDA.
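Editing the image paths in every list file by hand is tedious, so a small script can do the rewrite. The sketch below is not part of the repository and makes an assumption about the file format: that each line of a ‘.txt’ file begins with an image path, so swapping a leading path prefix is enough. The function names and the example prefixes are hypothetical; inspect one of the files first to confirm the layout.

```python
from pathlib import Path


def rewrite_prefix(lines, old_prefix, new_prefix):
    """Replace old_prefix with new_prefix at the start of each line."""
    out = []
    for line in lines:
        if line.startswith(old_prefix):
            line = new_prefix + line[len(old_prefix):]
        out.append(line)  # lines without the prefix are kept unchanged
    return out


def rewrite_list_files(data_dir, old_prefix, new_prefix):
    """Rewrite every .txt file under data_dir in place; return changed files."""
    changed = []
    for txt in sorted(Path(data_dir).rglob("*.txt")):
        original = txt.read_text().splitlines(keepends=True)
        updated = rewrite_prefix(original, old_prefix, new_prefix)
        if updated != original:
            txt.write_text("".join(updated))
            changed.append(txt)
    return changed


# Example call (both prefixes are placeholders, adjust to your setup):
# rewrite_list_files("./data", "/old/dataset/root/", "/my/dataset/root/")
```

Because the files are rewritten in place, keeping a copy of ‘./data/’ or working under version control makes it easy to undo a wrong prefix.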

Training

Office-31

```python

sh run_office_uda.sh

```

Office-Home

```python

sh run_office_home_uda.sh