Flexible neural models outperform grammar- and automaton-based counterparts on a variety of sequence modeling tasks. However, neural models perform poorly in settings requiring compositional generalization beyond the training data — particularly to rare or unseen subsequences...

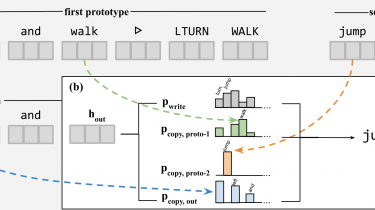

Past work has found symbolic scaffolding (e.g. grammars or automata) essential in these settings. Here we present a family of learned data augmentation schemes that support a large category of compositional generalizations without appeal to latent symbolic structure. Our approach to data augmentation has two components: recombination of original training examples via a prototype-based generative model, and resampling of generated examples to encourage extrapolation. Training an ordinary neural sequence model on a dataset augmented with recombined and resampled examples significantly improves generalization in two language processing problems, instruction following (SCAN) and morphological analysis (SIGMORPHON 2018), where our approach enables learning of new constructions and tenses from as few as eight initial examples.

(under review)